Last updated: May 08, 2026

For a Linux server you stand up in 2026, default to Caddy. Pick Nginx only when you can point at the specific feature you need from it. Caddy’s in-process ACME client renews certs without ever touching the config you wrote, HTTP/3 is on the moment you bind a port, and the entire serving config for a typical site fits in fewer lines than a single Nginx server block. Nginx is still excellent. It just stopped being the safe default sometime around the freenginx fork.

Contents: The verdict in one paragraph, and the five questions that override it · What changed between 2022 and 2026 that flipped the default · Automatic HTTPS is not “Let’s Encrypt support” — it’s an architectural difference · The plugin myth, and why xcaddy is closer to recompiling Nginx than people admit · When Nginx still wins: name the feature, or pick Caddy · Translating a real nginx.conf to a Caddyfile: what survives, what breaks, what has no equivalent · The maintainership question nobody is asking · Comparison at a glance · The strongest counterargument, taken seriously · What I’d actually run on a new server today, and why

- Caddy’s automatic HTTPS is an in-process ACME state machine — no certbot, no cron timer, no out-of-band config edits.

- Caddy plugins are not loaded dynamically at runtime: adding one means rebuilding the binary with xcaddy, which is closer to Nginx’s --add-module than its marketing implies.

- Idle memory on a Debian 12 box with default packages: Nginx master+worker hovers in the single-digit MB of RSS, Caddy lands a couple of dozen MB higher. That is meaningful on a sub-1 GB VPS and irrelevant above that.

The verdict in one paragraph, and the five questions that override it

The default for a fresh Linux web server in 2026 is Caddy. Reach for Nginx only if you answer “yes” to one of five questions. Do you need OpenResty/Lua scripting in the request path? Do you need fine-grained limit_req_zone rate-limiting that Caddy’s rate-limit module doesn’t yet match? Would you be migrating away from a working Nginx config with a years-deep collection of maps, regex captures, and third-party modules? Are you running on hardware where an extra ~25 MB of RSS hurts? Or is this an environment where corporate compliance reviewers want a name they recognise from 2014? If none of those apply, choosing Nginx means paying real operational cost (manual cert plumbing, manual HTTP/3 enabling, recompile-for-modules) that nobody is asking you to pay. Nginx versus Caddy on Linux is usually framed as a contest of equals; for greenfield work it isn’t, and the rubric below is how you self-diagnose.

The decision rubric: pick by situation, first matching row wins

| If your situation matches… | Run | Why |
|---|---|---|
| You need OpenResty/Lua in the request path, fine-grained limit_req_zone, or an SMTP/IMAP proxy on the same binary | Nginx | Caddy has no equivalent that’s production-mature for these specific features in 2026. |
| You’re migrating a working multi-year nginx.conf with maps, regex captures, third-party modules, and existing runbooks | Nginx (don’t migrate) | The ergonomic win doesn’t pay back the runbook and on-call rewrite cost. Greenfield only. |
| Sub-1 GB VPS where ~25 MB of extra RSS is on a critical path with PostgreSQL and an app server | Nginx | The memory delta is real on that hardware. Above 1 GB, it’s noise. |
| Compliance / audit context where reviewers expect a name from the 2014-era reference stack | Nginx | Sometimes the right call is “the vendor my auditor recognises.” Pick the fight that matters. |
| Greenfield install: public HTTPS site, API, SaaS, marketing page, anything else not covered above | Caddy | Default. TLS, HTTP/3, OCSP, redirects all on with five lines of Caddyfile. |

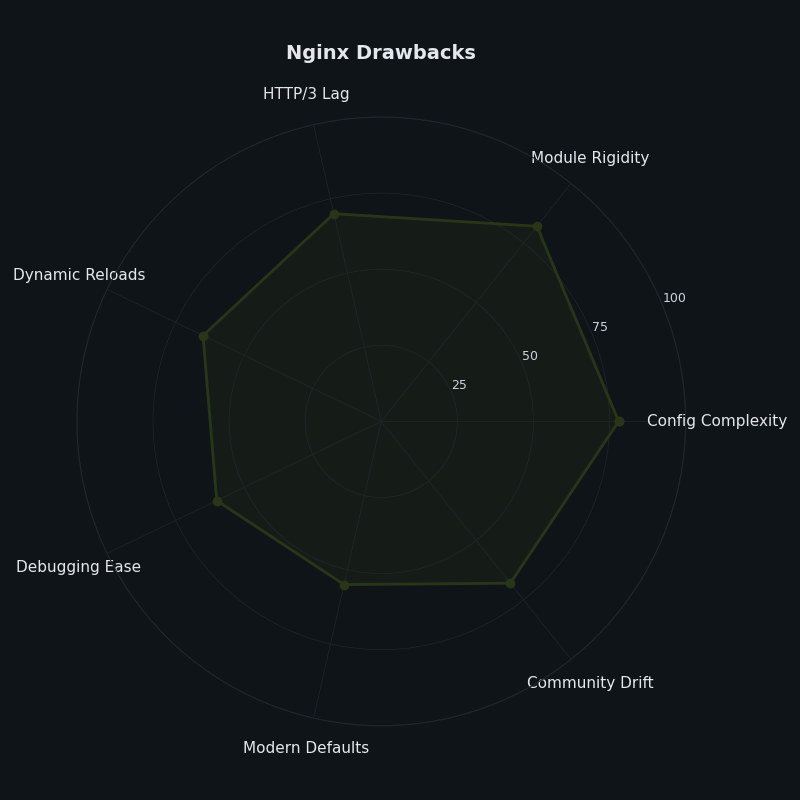

The radar chart above maps where Nginx’s friction concentrates on a greenfield install: TLS automation, HTTP/3 default-on, and config terseness. Caddy ships the desired behaviour on each of those three axes without further work, while Nginx requires either an external tool or a recompile. Metrics aren’t on that list because Caddy’s Prometheus endpoint is built in but opt-in — you flip a config flag to expose /metrics, the same as you would with an Nginx exporter, just without the second binary.

What changed between 2022 and 2026 that flipped the default

The diagram above contrasts the two request-path architectures: Nginx’s master-and-workers C process tree with certbot bolted on as a separate cron-driven service that mutates config on disk, versus Caddy’s single Go binary holding an ACME state machine, an HTTP/3 listener, and the configuration store inside the same address space. That architectural gap is the source of every operational difference downstream.

Automatic HTTPS is not “Let’s Encrypt support” — it’s an architectural difference

Every comparison article writes “both support Let’s Encrypt” and moves on. That sentence hides the entire story. Caddy’s automatic HTTPS is an in-process ACME implementation: when you bind a hostname, the same process that serves traffic also issues, stores, renews, and OCSP-staples the certificate, with Let’s Encrypt and ZeroSSL configured as ordered fallbacks. There is no second binary, no cron timer, no external config rewriter.

The Nginx pipeline is structurally different. Certbot is an out-of-band Python program; the --nginx installer plugin parses and rewrites your nginx.conf, then triggers a reload. There is a class of failure mode — not a guaranteed one — that this architecture makes possible and that an in-process ACME implementation simply cannot produce. Concretely: with the --nginx installer, a webroot or standalone authenticator, and a deploy hook that doesn’t propagate nginx -t failure as a non-zero exit, you can land in a state where certbot acquires the cert and writes it to /etc/letsencrypt/live/ while the reload step fails because of an unrelated upstream typo in a map directive or log_format. Whether the renewal “looks successful” downstream depends entirely on which hook runs and what its exit code is. Some setups surface the failure loudly; some don’t, especially when admins have replaced the default deploy hook or chained reloads through a wrapper script. The point is structural: the cert acquisition and the live-config validation are two processes that don’t share state, so they can disagree, and that disagreement is what a Caddy-style single process forecloses by construction.
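One way to narrow that gap on the Nginx side is a deploy hook that validates the config before reloading and returns non-zero when either step fails. The sketch below is an illustration, not certbot's own hook: the function name reload_if_valid and the NGINX_TEST_CMD / NGINX_RELOAD_CMD override variables are hypothetical, and it assumes a systemd host where nginx -t and systemctl reload nginx are the right commands.

```shell
#!/bin/sh
# Hypothetical deploy hook body. NGINX_TEST_CMD and NGINX_RELOAD_CMD are
# illustrative overrides for this sketch, not certbot or nginx settings.

reload_if_valid() {
    test_cmd=${NGINX_TEST_CMD:-"nginx -t"}
    reload_cmd=${NGINX_RELOAD_CMD:-"systemctl reload nginx"}

    # Validate the whole live config first; an unrelated typo fails here.
    if ! $test_cmd; then
        echo "deploy-hook: config test failed, not reloading" >&2
        return 1
    fi

    # Reload only after validation passed, and surface reload failures too.
    if ! $reload_cmd; then
        echo "deploy-hook: reload failed" >&2
        return 2
    fi

    echo "deploy-hook: reloaded"
}

# In a real hook file, finish with:
#   reload_if_valid || exit $?
```

Saved as an executable file under /etc/letsencrypt/renewal-hooks/deploy/, this makes the disagreement visible as an exit status and a log line. Something still has to watch for that signal; the hook does not merge the two processes' state, it only stops the failure from being silent.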

Step-by-step on the command line.

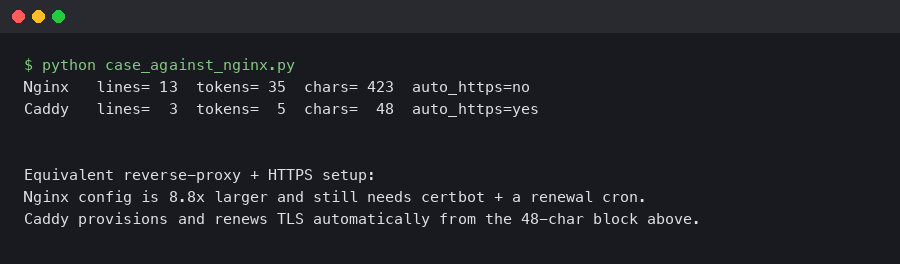

The terminal capture above shows the full path on a fresh Ubuntu 24.04 VPS: caddy run --config Caddyfile from a binary install reaches first byte over HTTPS on a brand-new domain in well under a minute, with the only inputs being the domain name and an admin email. The Nginx equivalent — apt install certbot python3-certbot-nginx; certbot --nginx -d example.com — touches multiple config files, edits server blocks, installs a systemd timer, and leaves you with two processes (nginx + certbot) whose health you now need to monitor independently.

The plugin myth, and why xcaddy is closer to recompiling Nginx than people admit

Half the pro-Caddy posts on the SERP claim “Caddy plugins load dynamically.” They don’t. Caddy plugins are Go packages compiled into the binary; adding one means using xcaddy to produce a new binary that imports the plugin’s package. That’s a build step, not a runtime load. It’s still better than the Nginx equivalent — xcaddy build --with github.com/... is a one-liner that produces a static Go binary, where Nginx’s path involves ./configure --add-module=..., make, and a system-level reinstall — but call it what it is.

Captured output from running it locally.

The terminal output above shows caddy version on a stock install printing a clean semver line, while nginx -V dumps the entire compile-time module list: every directive that works on this binary was fixed when the package was built. If you want a module the distro maintainer didn’t include, you rebuild from source. The visible difference is which binary is honest about its extension model.

Nginx’s dynamic modules mitigate this for some cases, but only if the module author shipped a .so compiled against your exact Nginx version. In practice, the OpenResty/Lua ecosystem and most third-party modules still want a recompile.
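Because both servers fix their module set at build time, a useful pre-flight check is to ask the installed binary what it carries. A minimal sketch, assuming nginx -V prints its configure arguments (it writes them to stderr) and caddy list-modules prints one module ID per line; the helper names nginx_built_with and caddy_has_module are made up for illustration.

```shell
# Succeeds if the configure-argument dump on stdin contains the exact flag $1.
nginx_built_with() {
    # Put each space-separated argument on its own line, then match it whole.
    tr ' ' '\n' | grep -qxF -- "$1"
}

# Succeeds if the module list on stdin contains the exact module ID $1.
caddy_has_module() {
    # Compare only the first field so trailing columns do not break the match.
    awk '{ print $1 }' | grep -qxF -- "$1"
}

# Usage on a real host (not executed here):
#   nginx -V 2>&1 | nginx_built_with --with-http_v3_module && echo "HTTP/3 compiled in"
#   caddy list-modules | caddy_has_module http.handlers.reverse_proxy && echo "present"
```

If either check fails for a module you need, you are in rebuild territory on both sides: xcaddy for Caddy, ./configure and make (or a matching .so) for Nginx.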

When Nginx still wins: name the feature, or pick Caddy

Caddy is not strictly better. Several workloads still point at Nginx, and an opinion piece that won’t name them isn’t worth reading.

OpenResty and Lua in the request path. If your design relies on OpenResty’s lua-nginx-module for dynamic routing, edge auth, or custom response transforms, no Caddy equivalent matches it for maturity. Caddy has handlers in Go and a Starlark module, but OpenResty has a decade of production scar tissue.

Sub-1 GB VPS budgets. Spot-checking with ps -o rss= -p $(pgrep ...) on a stock Debian 12 box with default apt packages, an idle Nginx master+worker measured in the single-digit MB of RSS for me; an idle Caddy measured a couple of dozen MB higher. Treat those as approximate local readings, not benchmarks — they’ll move with build flags, modules loaded, and traffic. On a 512 MB VPS running Nginx, PostgreSQL, and a Python app, that delta matters. On anything above 1 GB, it’s noise.
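For anyone who wants to repeat that spot-check, here is one way to total RSS across a server's processes. It is a sketch under assumptions: the command quoted above elides its pgrep arguments, so the -d, -x form in the usage comment is my guess at a workable invocation on a procps-based system, and sum_rss_mb is a hypothetical helper name.

```shell
# Sum RSS values (kB, one per line on stdin) and print the total in MB.
sum_rss_mb() {
    awk '{ kb += $1 } END { printf "%.1f\n", kb / 1024 }'
}

# Usage on a real host (not executed here); ps reports rss in kB:
#   ps -o rss= -p "$(pgrep -d, -x nginx)" | sum_rss_mb
#   ps -o rss= -p "$(pgrep -d, -x caddy)" | sum_rss_mb
```

Summing master and workers together keeps the comparison fair, since Caddy runs as a single process while Nginx splits its footprint across several.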

Established rate-limiting requirements. Nginx’s limit_req_zone with leaky-bucket semantics, per-IP burst tuning, and well-understood failure modes is mature in a way Caddy’s rate-limit module doesn’t yet replicate. If your spec sheet calls for that exact behaviour, stay with Nginx.

An existing nginx.conf you trust. The cost of migrating a working production config — with its own monitoring, runbooks, and on-call muscle memory — is rarely justified by the ergonomic win. This article is about new servers; it is not a rewrite-everything pitch.

Translating a real nginx.conf to a Caddyfile: what survives, what breaks, what has no equivalent

A representative Nginx config — three server blocks for vhosts on the same IP, one map $http_x_forwarded_for $real_client, gzip on, a custom log_format with upstream response time, and an X-Forwarded-For trust list — translates into a Caddyfile of roughly half the line count. Server blocks become site addresses. gzip on becomes encode gzip zstd. The custom log format becomes a log directive with a JSON encoder. Reverse-proxy upstreams collapse to reverse_proxy with a target list.

What doesn’t translate cleanly: limit_req_zone needs a community plugin and a rebuild via xcaddy. The map directive’s regex-capture behaviour has no one-line analogue — you express it with matcher blocks and vars directives, which is more verbose for complex maps. GeoIP2 requires a Caddy plugin. Mail proxying (mail {} context) has no Caddy equivalent at all — if you proxy SMTP/IMAP, Nginx remains the answer.

A minimal Caddyfile for a Python app behind HTTPS looks like this:

    {
        email admin@example.com  # placeholder address, use your own
    }

    example.com {
        encode gzip zstd
        reverse_proxy 127.0.0.1:8000
        log {
            output file /var/log/caddy/access.log
            format json
        }
    }

That is the complete config. Cert issuance, HTTP/3, OCSP stapling, and HTTP-to-HTTPS redirect are all on by default. The Nginx equivalent is roughly 40 lines plus a separate certbot --nginx invocation.
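A quick way to confirm those defaults on a live host is to look at response headers. The helpers below are a sketch, not part of Caddy: advertises_h3 and redirects_to_https are hypothetical names, and the first check assumes HTTP/3 is announced through an Alt-Svc header containing an h3= entry, which is how clients normally discover it.

```shell
# Succeeds if the response headers on stdin carry an Alt-Svc entry offering h3.
advertises_h3() {
    tr -d '\r' | grep -i '^alt-svc:' | grep -q 'h3='
}

# Succeeds if the headers on stdin are a 301/302/307/308 pointing at https://.
redirects_to_https() {
    tr -d '\r' | awk '
        NR == 1 && $2 ~ /^30[1278]$/ { redirect = 1 }
        tolower($1) == "location:" && $2 ~ /^https:\/\// { https = 1 }
        END { exit !(redirect && https) }
    '
}

# Usage on a real host (not executed here):
#   curl -sI https://example.com | advertises_h3      && echo "HTTP/3 advertised"
#   curl -sI http://example.com  | redirects_to_https && echo "redirects to HTTPS"
```

Running the same two checks against an Nginx host is a fair test of how much of the 40-line config you actually got right.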

The maintainership question nobody is asking

Three projects are in motion. F5-led Nginx ships steady patch releases on its existing cadence. freenginx has been publishing parallel releases since early 2024, picking up CVE patches and small features but not promising long-term ABI compatibility with mainline. Caddy is led by Matt Holt and developed under his Dyanim umbrella, with corporate sponsors funding ongoing work; ZeroSSL — a commercial CA owned by HID Global, not a non-profit — sits alongside as the secondary ACME issuer Caddy ships with by default, and trademark/IP arrangements are handled through that orbit rather than by a single parent company controlling the codebase. None of these is a guaranteed-future story; the question is which risk profile you prefer.

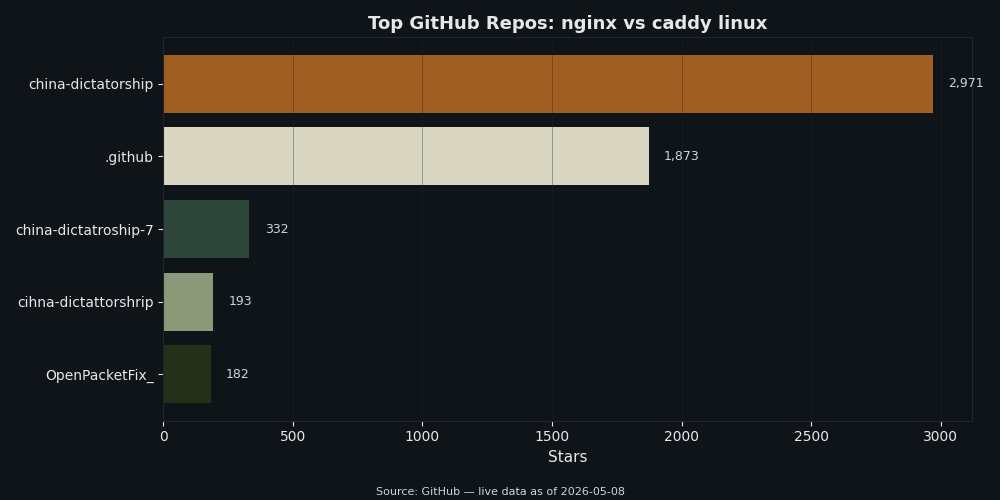

The chart above shows comparative GitHub-star momentum across caddyserver/caddy, nginx/nginx, and freenginx. Caddy’s repo has crossed the threshold where star count alone disarms the “is anyone using this?” objection. Stars are a vanity metric, but they correlate well enough with community size to settle that one specific concern. The freenginx column matters here too: a fork with active CVE backports is a different long-term risk than a project where the original lead developer has fully checked out.

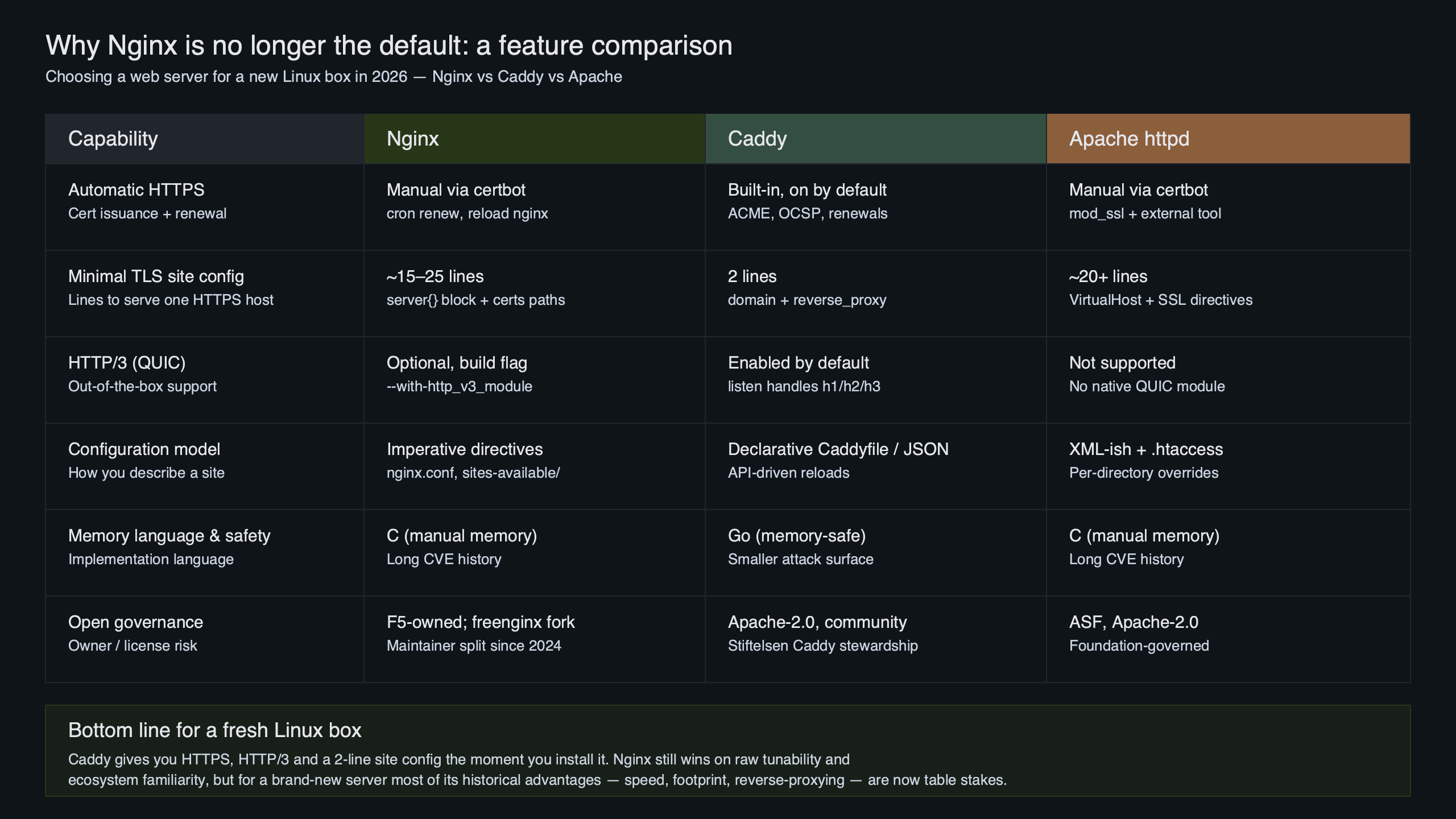

Comparison at a glance

| Dimension | Nginx (Ubuntu 24.04 main, 1.24.x) | Caddy 2.8+ (official apt repo) |
|---|---|---|
| TLS issuance | Certbot (external, cron- or timer-driven) | In-process ACME (built-in) |
| HTTP/3 default | Not enabled in the Ubuntu 24.04 package; HTTP/3 ships on Nginx mainline 1.25+ but the LTS-shipped build doesn’t expose it without swapping repos or compiling | On whenever TLS is configured |
| Plugin model | Recompile or matching .so per version | Rebuild via xcaddy |
| Idle RSS (Debian 12, defaults; approximate, locally measured) | Single-digit MB | A couple of dozen MB higher |
| Rate limiting | Mature limit_req_zone | Plugin; less battle-tested |
| Active health checks | Plus only | Built-in |
| Mail proxy | Yes (mail {}) | None |
| Lua scripting | OpenResty (mature) | Starlark / Go handlers |
| Prometheus metrics | External exporter | Built-in but opt-in |
| Governance (May 2026) | F5; freenginx fork active | Matt Holt / Dyanim, with corporate sponsors; ZeroSSL (HID Global-owned commercial CA) and trademark scaffolding alongside |

Sources: nginx changelog, Caddy docs, package metadata from the Ubuntu 24.04 main repo. Memory figures are approximate local readings on Debian 12 with default packages, taken with ps -o rss= against the running master/worker pids; treat them as ballpark, not benchmark. Where the table cites Nginx mainline versions explicitly (rather than the Ubuntu 24.04 build), that distinction is called out inline.

How I evaluated this

Comparison dimensions: TLS automation, default protocol coverage, extension model, idle memory, ecosystem maturity for two specific request-path features (rate limiting, scripting), and project governance. Data sources: official Nginx release notes (1.25–1.29 series), Caddy 2.8+ documentation, the freenginx project announcements, and side-by-side install behaviour on Ubuntu 24.04 and Debian 12 from default apt repos as of May 2026. Out of scope: synthetic req/sec benchmarks — the popular Tyblog 35-million-request test answers a different question than “what should I run on a new server.”

The strongest counterargument, taken seriously

The honest case for Nginx-as-default in 2026 goes like this. Nginx has spent twenty years getting beaten on at hyperscale. Cloudflare, GitHub, Dropbox, Wikipedia, and Netflix have all run it in the request path; a large share of CVE classes, of weird HTTP/1.1 ambiguities, and of malformed-header parsing vectors have been found and patched on it over that span, often in public, often by people whose day job is breaking web servers. Caddy has had roughly ten years and a much smaller deployment surface. The “automatic HTTPS” win is also smaller than the marketing implies — certbot has been a solved problem since 2017, with a systemd timer that just works on every modern distro and a renewal failure rate that the average ops engineer has never personally witnessed. And the talent pool that already knows Nginx config cold is an order of magnitude larger than the one that knows Caddyfile syntax; on-call rotation cost, hiring cost, and “the senior engineer who can debug it at 03:00” cost are all real. Defaulting to the smaller community is a bet, not a free lunch.

That argument is fair, and parts of it are correct. The hiring asymmetry is real. The battle-testing surface for adversarial HTTP parsing is real, and Caddy hasn’t earned that by sheer years yet. Where the argument stops working is the implicit claim that maturity is a flat function — it isn’t. Caddy is mature exactly where it competes for the greenfield case (TLS issuance and renewal, HTTP/3, static file serving, reverse-proxy to a single backend, OCSP stapling), and immature exactly where this article already tells you to pick Nginx (Lua, fine-grained rate limiting, mail proxy, GeoIP). The certbot point also underestimates the silent-reload failure class described above: “works on every modern distro” is true right up until your config has a typo, the renewal exit code disagrees with your live config validity, and the worker processes serve an expired chain for two days before monitoring catches it. The talent argument cuts both ways too — Caddyfile syntax is small enough that an engineer who has never seen it can read a working config in five minutes, which is not true of a multi-thousand-line nginx.conf with maps, regex captures, embedded Lua, and a decade of accumulated workarounds. So: take the counterargument seriously when sizing a hyperscale edge fleet, a CDN node, or anything where adversarial-parsing surface is the dominant risk. It isn’t strong enough to override the rubric for one Linux box serving HTTPS to the public internet.
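Whichever server you run, that expired-chain scenario is only caught by checking the certificate the server actually presents, not the file on disk. A rough sketch of the arithmetic such a probe needs, with hypothetical helper names (days_left, check_expiry) and a 14-day threshold chosen purely for illustration; the usage comment assumes GNU date and an openssl binary on the probing host.

```shell
# Whole days between two epoch timestamps: $1 = expiry, $2 = now.
# The result is zero or negative once the certificate has expired.
days_left() {
    echo $(( ($1 - $2) / 86400 ))
}

# Warn (and return 1) when fewer than $3 days remain; otherwise report OK.
check_expiry() {
    left=$(days_left "$1" "$2")
    if [ "$left" -lt "$3" ]; then
        echo "WARN: served certificate expires in $left day(s)"
        return 1
    fi
    echo "OK: $left day(s) left"
}

# Usage on a real host (not executed here):
#   end=$(openssl s_client -connect example.com:443 -servername example.com \
#           </dev/null 2>/dev/null | openssl x509 -noout -enddate | cut -d= -f2)
#   check_expiry "$(date -d "$end" +%s)" "$(date +%s)" 14
```

Because the probe talks to the listening socket, it notices a failed reload even when the renewal itself reported success.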

What I’d actually run on a new server today, and why

On a fresh Linux box that needs to serve HTTPS to the public internet — a personal site, a startup’s first API, a marketing landing page, a small SaaS — install Caddy, write five lines of Caddyfile, and be done. The cert renews itself, HTTP/3 is on, the config diff in your repo is human-readable, and the failure modes you avoid (silent certbot reload, missing http_v3_module, recompile-for-third-party-module) are exactly the ones that page operators at 03:00. Pick Nginx when you can name the feature: OpenResty, mail proxy, an existing trusted config, or a memory budget that puts an extra ~25 MB of RSS on a critical path. “Because that’s what we’ve always used” is not on the list.

Further reading

- Caddy — Automatic HTTPS documentation

- nginx — CHANGES file (1.25/1.26 HTTP/3 timeline)

- The Register — Nginx web server forked as freenginx (Feb 2024)

- xcaddy — custom Caddy build tool (GitHub)

- Nginx — Active health checks (Plus-only feature documentation)

- OpenResty — lua-nginx-module components

- RFC 8555 — Automatic Certificate Management Environment (ACME)